Voices of CS: Shayan Hooshmand

The CS undergrad shares how he started doing research in the Speech Lab and won a Best Paper award at EMNLP 2021

Shayan Hooshmand grew up speaking Spanish, Persian, and English. Every time he switched between the three languages he felt like he was walking between social worlds and presenting a different version of himself. Hooshmand is also an actor and it is this same affinity for shapeshifting that underpins his theatre career. As he got older, he tried to learn more languages or at least more about as many languages as he could.

Once he got to Columbia, he was all set to study computer science (CS) and found himself gravitating toward natural language processing and speech processing because those research areas combined CS and linguistics. The prospect of teaching machines to understand language was interesting to him. Hooshmand decided to take Intro Linguistics, loved it, and added Linguistics as a double major.

When he came across Professor Julia Hirschberg’s Speech Lab and their research on emotion and charisma in speech, it caught his attention. He emailed Hirschberg every semester for three semesters until, in the spring of 2021, there was finally an opening to join one of her projects which he accepted immediately.

The research project he was assigned to focuses on automatically identifying humor in Facebook (FB) posts. The system the team created, Collecting Humor Reaction Labels, earned them a Best Paper award at a prestigious conference, Empirical Methods in Natural Language Processing (EMNLP 2021). We caught up with Hooshmand to learn more about what it takes to do research and win an award.

Q: What is CHoRAL and what were your findings?

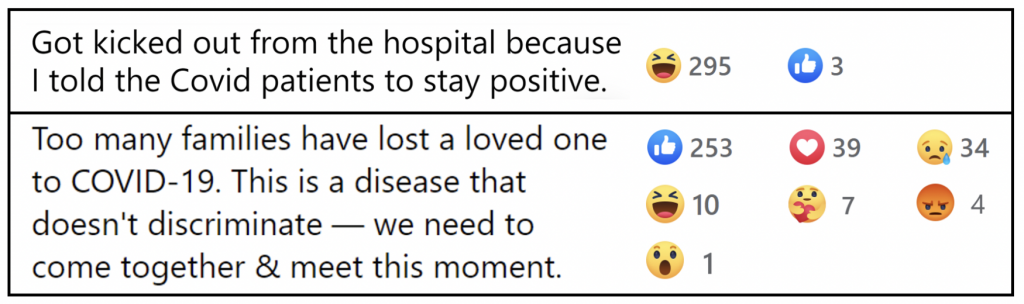

CHoRaL stands for Collecting Humor Reaction Labels, it is a framework for automatically scoring Facebook posts on humor. It presents two formulas, one to calculate a humor score, and one to calculate a non-humor score – each is based on the reaction distribution of a Facebook post.

Our paper presents both the framework and a dataset collected using the framework. The dataset contains about 800,000 COVID-related FB posts, each post was assigned humor and non-humor scores. Our goal for this framework and dataset is to enable future humor prediction research.

We validated our framework with experiments using several different BERT-based deep learning models. BERT is a machine learning model that learns contextual relations between words (or sub-words) in a text. We also ran lexico-semantic analyses on the humorous posts and non-humorous posts in our dataset to find some interesting patterns, like words related to space and time being negatively correlated with humor.

Q: How did this project come about? What was the process of deciding what to do?

The overall project didn’t have anything to do with humor immediately. The goal of this (ongoing) project is to detect fake and manipulated media online. As part of this larger goal, we thought it would be useful to automatically predict humor; then, if some piece of false information online is clearly humorous, we can attribute it to benign “fake news” and not anything malicious.

The pleasure of being an undergrad, though, is that I wasn’t fixated on these larger goals or the meetings with outside sponsors and collaborators that Professor Hirschberg and the PhD students attended. I got to focus on humor prediction and working directly with my PhD mentor, Zixiaofan Brenda Yang. Brenda came in with the brilliant idea to use Facebook humor reactions as a proxy for humor labels. At a high level, posts with more humor reactions would be more “humorous.” From there, the work became testing different methods to formalize this intuition.

Q: How much data did you have to work with? How was it processed?

So much data! We worked with millions of Facebook posts. We downloaded posts from CrowdTangle, a social media insights tool owned by Facebook. At first, we downloaded small batches of ~20,000 posts to test some of our formulas and definitions of humor and non-humor scores. In the end, we downloaded a couple of million Facebook posts and filtered them to keep only pure-text posts in English. We wrote the cleaning scripts in Python and ran them remotely on our lab computers.

Q: Were you prepared to work on it or did you learn as the project progressed?

It was a bit of a trial by fire for me since I was unaware of the standard proceedings and expectations surrounding academic research. For example, it shocked me that most of the “framing” work is done only when it comes time for paper writing. For a lot of the research process, you do not actually have a set, detailed idea of how you are going to present your work.

I also had to study technical concepts from information theory and statistics as we went along. Luckily, I always had my mentor, Brenda, as a guide.

Q: What were the things you already knew and what were the things you had to learn while working on the project?

I knew about the state-of-the-art deep learning models for NLP (RNNs, Transformers, etc.) and some other basic machine learning concepts. Naively, I thought my work would involve working with these models all the time, playing with their architecture, and tuning their hyperparameters.

In reality, I focused much more on data collection, preprocessing, and developing those formulas on humor and non-humor scores. Data collection and preprocessing were almost completely new to me (aside from some preprocessing functions I had written in previous CS classes) –– at least for our project, this work was fairly straightforward.

Developing the formulas required a lot of experimentation and reading up on technical concepts. I spent a couple of days trying to understand KL divergence conceptually before we tried to use it for an element of our project. In the end, it did not even end up being part of the paper. The cool thing, though, is that once I read up on that I ended up using it later in the project as the basis for calculating our non-humor score.

Q: Looking back, what were the skills that you wished you had before starting the project?

I wish I had a greater understanding of reading scientific papers, writing them, and the expectations for what kind of information from your project goes into them. There were many instances throughout the semester where Brenda had to remind me that we needed to be thorough with a certain decision or read up on previous work that might have faced the same problem because we would need to justify all our processes to the scientific community.

Q: Did working on this project make you want to change your research interests or focus?

The most interesting parts of the project for me were the linguistic analyses we ran on our humor-labeled data and writing the paper. I think that is because I like to approach things from more of a linguistics perspective, not as much pure computer science, so I am trying to direct my research from that angle more now.

Q: Will you continue to work on CHoRAL? Or are you working on something else now?

I have switched to a text-to-speech project in the Speech Lab this semester, so I’m not working on CHoRaL full-time anymore.

Q: Do you want to continue doing research and pursue a graduate degree?

I am definitely going to continue research throughout my undergrad years, and a PhD is possibly in my future. I am leaning toward working outside of academia for a few years after college and then applying to PhD programs later in my 20s.

Q: Would you recommend volunteering or seeking projects out to other students?

Of course! Even if you’re not interested in pursuing higher education, there’s so much to learn from a research environment. Particularly in the AI/ML space, doing research demystifies all the jargon and far-reaching statements about computer intelligence that you hear in the media. It is also a great way to gain practical skills, like keeping a project codebase organized and communicating the work I did independently with my mentors.

Q: Is there anything else that you think people should know about the project?

Not that I can think of right now, but if any students want to talk more about the project or undergrad research in general, my UNI is sh3988.