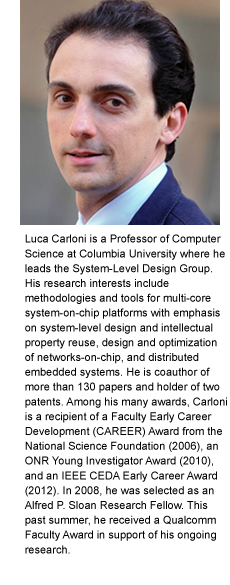

Luca Carloni on the coming engineering renaissance in chip design

For decades, device makers, engineers, and others have been able to count on a new generation of chips coming to market, each more powerful than the last, all courtesy of shrinking transistors that allow engineers to fit more on a chip. This is the famous Moore’s law. While a boon for technical innovation and a catalyst for entire new industries, the reliability of always having more powerful chips has had the opposite effect on chip architecture, deterring rather than spurring innovation. Why bother designing a whole new chip architecture when in two years a better chip using the traditional mold will be out? But as each increase in transistor density becomes vastly more expensive, Moore’s law (more an objective than an actual law) is beginning to bump against physical limits, creating an opportunity and incentive for engineering researchers to again focus time and effort on new varieties of chips. In this interview, Luca Carloni discusses where he sees chip design heading, how his lab hopes to contribute to that redesign, and the changes he is making in the courses he teaches to train students for this new reality.

Jensen Huang, CEO of Nvidia, said earlier this summer that Moore’s law is dead. Is it?

Luca Carloni: You won’t ever get me to say that Moore’s law is dead. That’s something I first heard 20 years ago, when I was still a student. Many years have passed, and every two years or so we have seen the arrival of a new generation of semiconductor technology. Sometime this year Intel is expected to ship advanced processors based on the company’s 10nm process technology.

What is over, however, is the economic aspect of Moore’s law. Doubling the density of transistors can’t be done anymore without vastly increasing costs. Every jump in technology requires building a new fab [semiconductor fabrication facility], which can cost $5-10B. When there’s a ready market to buy up the newest chips, as there was when people first started buying laptops and smartphones, investing in a new fab makes sense. But it is not always easy to find a new product, or a new market, that brings billions of new chip sales. Still, I believe that these markets will continue to be discovered. There is a need for faster and better computers for autonomous cars, cloud computing, security, cognitive computing, robotics, etc.

We can’t get there just by shrinking transistors into tighter spaces and increasing their densities on chip because we can no longer dissipate the resulting power densities and heat. Now we have this fundamental tradeoff between two opposing forces: more computational performance versus higher power/energy dissipation.

How do you see this tradeoff being resolved?

By building specialized chips for different applications. One type of chip is not going to work for all applications. The system that’s best for the smartphone is going to be different from what’s best for the autonomous car, or cloud servers, or IoT [Internet-of-Things] devices. The performance-energy tradeoff is going to be different in each case.

Because of these conflicting demands, we are already seeing a migration from a homogeneous multicore architecture to heterogeneous SoCs [systems-on-chip] that contain specialized hardware. This consists of accelerators that perform a single function or algorithm much faster and more efficiently than software. Here the processor becomes just another component within a system of components. And the SoC becomes a more heterogeneous multicore architecture as it integrates many different components. Indeed, I expect that no single SoC architecture will dominate all markets. The set of heterogeneous SoCs in production in any given year will be itself heterogeneous!

We’re also seeing the rise of the field-programmable gate array, or FPGA, which can be programmed for different purposes. You’ll get better performance from an FPGA than from software, and an FPGA is a much cheaper proposition than building a chip from scratch, but between an FPGA and a specialized chip there are orders of magnitude of difference in energy efficiency and performance. So the demand for specialized chips will remain strong, but we need to design them faster and more cheaply while considering the target application: What algorithms or software will it run? Can it be done in parallel? How much energy efficiency is required? There is no one right way to build a system anymore.

Heterogeneity is the emerging solution but heterogeneity increases the complexity of designing and programming chips. What you decide to put on a chip is just the beginning of a very complex engineering effort that spans both hardware and software.

What does this heterogeneity mean for computer engineering?

We need a new way of thinking about engineering chips. For too long, chip architects have been mostly on the sidelines as new faster chips based on traditional architectures continued to arrive every two years or so. But now there’s this chance to go back to design, to be creative again and come up with innovative architectures. It’s time for a renaissance in computer engineering, if you will, to think about things differently, to move, for example, from a processor-centric perspective to a system-centric perspective, where it’s necessary to think about how a change in one component affects the other components on the chip. Any change must benefit the whole system.

For handling complexity, we need to raise the level of abstraction in hardware design, similar to how it’s done in software. Instead of thinking in terms of bytes and clock cycles, we should think in terms of the behavior we’re aiming for and in terms of the corresponding data structures and tasks. As we do so, we need to reevaluate continuously the benefits and costs of doing things in hardware instead of software and vice versa. Which is the best design? It depends. Do you care more about speed? Or do you care more about power dissipation?

But it’s not just one thing. We need to think in terms of the entire infrastructure, from design and programming to fabrication. You think you have a better chip design, but can you program it and validate it, in conjunction with a system of heterogeneous components, before committing to the expensive manufacturing stage? This system-level design approach motivates the research in our lab here at Columbia.

We have developed the idea of Embedded Scalable Platforms, or ESP, that addresses the challenges of SoC design by combining a new template architecture and a companion system-level design methodology. With ESP, we are now able to realize complete prototypes of heterogeneous SoCs on FPGAs very rapidly. These prototypes have a very high degree of complexity for an academic laboratory.

To support the ESP methodology, we are developing some innovative tools. We have a new emulator for multicore architectures, which we presented at a conference last February—and we released the software as well—so we can describe a new machine and then run this emulator software on top of an existing multicore computer to see how the new architecture behaves when running complex applications. While this type of emulation has been done before—and across the different instruction sets such as Intel’s x86 and the ARM ISA—we have increased scalability so we can emulate a multicore machine on top of another multicore machine. And we made it possible to take advantage of the parallelism of the machines of today.

How have these changes affected how you teach chip architecture?

In my class on System-on-Chip Platforms, students learn to design complex hardware-software systems at a high level of abstraction. This means designing hardware with description languages that are closer to software, thus enabling faster full-system simulation and more effective optimization with high-level synthesis tools. During the first half of the semester students learn how to design accelerators with these methods. They also learn how to integrate heterogeneous components (accelerators and processors) into a given SoC, how to evaluate trade-off decisions in a multi-objective optimization space, and how to design components that are reusable across different systems and product generations.

In the second half of the semester, the students do a project that is structured as a design contest. They compete in teams to design an accelerator specialized for a certain function: one year it might be a computer vision algorithm, another year a machine learning task. They are given some vanilla code to start. They must optimize the code in different ways to design three competitive versions of the accelerator, write software to integrate them in the system, and show that each runs correctly in our emulator. High-level synthesis allows each team to quickly evaluate many alternative design decisions. For example, if the algorithm requires that a multiplication be performed on two arrays of many elements, the students can decide to instantiate more multipliers to do many multiplications in parallel or, instead, to use fewer multipliers by time-sharing them across these multiplications. Basically, they experiment with the trade-offs of “computing in space” versus “computing in time.”
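[Editor's illustration, not part of the course material.] The space-versus-time trade-off for the array multiplication example can be sketched with a toy cost model in Python. The model is an assumption made only for illustration: each multiplier costs one unit of area and completes one multiplication per cycle, so a design with more multipliers finishes in fewer cycles but occupies more area. The function names `multiply_schedule` and `design_points` are hypothetical.

```python
import math


def multiply_schedule(n_elements, n_multipliers):
    """Return (cycles, area) for an element-wise multiply of two arrays.

    Toy model (an assumption, not a real synthesis result):
    - each multiplier performs one multiplication per cycle,
    - each multiplier costs one unit of area.
    """
    if n_elements < 0 or n_multipliers < 1:
        raise ValueError("need n_elements >= 0 and n_multipliers >= 1")
    # With fewer multipliers than elements, the multipliers are time-shared.
    cycles = math.ceil(n_elements / n_multipliers)
    area = n_multipliers
    return cycles, area


def design_points(n_elements, multiplier_options):
    """List (multipliers, cycles, area) for each candidate design."""
    return [(m, *multiply_schedule(n_elements, m)) for m in multiplier_options]


if __name__ == "__main__":
    # Sweep from "computing in time" (1 multiplier) to "computing in space" (64).
    for m, cycles, area in design_points(64, [1, 2, 8, 32, 64]):
        print(f"multipliers={m:2d}  cycles={cycles:2d}  area={area:2d}")
```

Under this simplified model, neither extreme is better in general: a single time-shared multiplier minimizes area, and one multiplier per element minimizes latency.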

The main goal for each team is to obtain three distinct designs of the accelerator that correspond to three distinct trade-off points in terms of performance versus area occupation. The quality of each final implementation is evaluated in the context of the work done by the entire class. Throughout the one-month duration of the project, a live Pareto-efficiency plot reporting the current position of the three best design implementations for each team in the bi-objective design space is made available on the course webpage. This allows students to continuously assess their own performance with respect to the rest of the class.
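[Editor's illustration, not the course's actual tooling.] The Pareto-efficient designs in a bi-objective space can be found with a short Python sketch. It assumes each design is a hypothetical `(latency, area)` pair and that lower is better for both objectives. A design is kept if no other design is at least as good in both objectives and strictly better in one.

```python
def dominates(a, b):
    """True if design a is no worse than b in both objectives and better in one.

    Each design is a (latency, area) tuple; both objectives are minimized.
    """
    return a[0] <= b[0] and a[1] <= b[1] and (a[0] < b[0] or a[1] < b[1])


def pareto_front(points):
    """Return the non-dominated designs, sorted by latency then area."""
    return sorted(
        p for p in points if not any(dominates(q, p) for q in points)
    )


if __name__ == "__main__":
    # Hypothetical (latency, area) results, for example from several teams.
    designs = [(64, 1), (8, 8), (1, 64), (10, 10), (8, 9)]
    print(pareto_front(designs))
```

The pairwise check is quadratic in the number of designs, which is adequate for a class-sized set of submissions.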

Do you foresee further changes to your class?

Yes, always. Course content will never be static when the need for innovation is so great.

Last year we started complementing the competitive aspect with a collaborative aspect. We now partition the student teams into subsets. The teams in each subset compete on designing a given component but are now also asked to pair up their three designs with those designed by the teams in other subsets to get the final system. This further promotes the goal of designing components that are reusable under different scenarios and under different constraints. This is collaborative engineering, and it is increasingly how engineering is done in the real world today.

Every year, we change up the class by adding something new—different algorithms to implement, for example. Students love this, and in the process they learn one of the most beautiful ideas of engineering: evaluating situational complexity from multiple viewpoints and balancing multiple tradeoffs. It’s exactly what’s needed today in chip design if we are to sustain the level of innovation that we have seen over the past few decades.

Posted September 6, 2017