Columbia Gaze Data Set

Advancing gaze tracking research with more images than any other gaze data set.

Overview

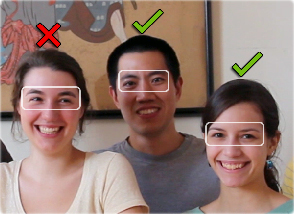

Gaze tracking and gaze locking have the potential to fundamentally change how we interact with the computers and devices around us. For that to happen, however, detectors must be accurate across many different gaze directions and head poses, and for a wide variety of potential users. The Columbia Gaze Data Set is a benchmark for comparing different detectors: 5,880 images of 56 people over 5 head poses and 21 gaze directions, the largest publicly available gaze data set of its time.

Detailed Statistics

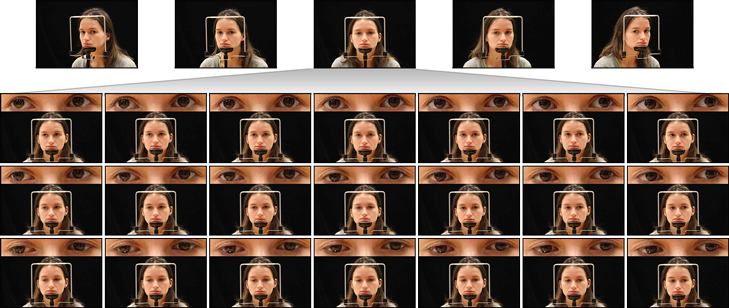

The data set contains a total of 5,880 high-resolution images of 56 different people (32 male, 24 female), and each image has a resolution of 5,184 × 3,456 pixels. 21 of its subjects are Asian, 19 are White, 8 are South Asian, 7 are Black, and 4 are Hispanic or Latino. Its subjects range from 18 to 36 years of age, and 21 of them wear prescription glasses.

For each subject, we acquired images for each combination of five horizontal head poses (0°, ±15°, ±30°), seven horizontal gaze directions (0°, ±5°, ±10°, ±15°), and three vertical gaze directions (0°, ±10°). Note that this means we collected five gaze locking images (0° vertical and horizontal gaze direction) for each subject: one for each head pose.
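The image counts follow directly from this acquisition design, and a short sketch can check them. The constant and function names below are illustrative only and are not part of the data set's distribution.

```python
from itertools import product

# Angles in degrees, taken from the description above.
HEAD_POSES = [-30, -15, 0, 15, 30]
HORIZONTAL_GAZES = [-15, -10, -5, 0, 5, 10, 15]
VERTICAL_GAZES = [-10, 0, 10]
NUM_SUBJECTS = 56


def configurations():
    """Return every (head pose, vertical gaze, horizontal gaze) combination."""
    return list(product(HEAD_POSES, VERTICAL_GAZES, HORIZONTAL_GAZES))


def is_gaze_locking(vertical, horizontal):
    """Gaze locking images have 0 degrees vertical and horizontal gaze direction."""
    return vertical == 0 and horizontal == 0


configs = configurations()
images_per_subject = len(configs)                 # 5 * 3 * 7 = 105
total_images = NUM_SUBJECTS * images_per_subject  # 56 * 105 = 5,880
locking_per_subject = sum(is_gaze_locking(v, h) for _, v, h in configs)

print(images_per_subject, total_images, locking_per_subject)  # 105 5880 5
```

Each subject therefore contributes 105 images, five of which are gaze locking images, and the 56 subjects together account for the 5,880 images in the data set.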

Collection Procedure

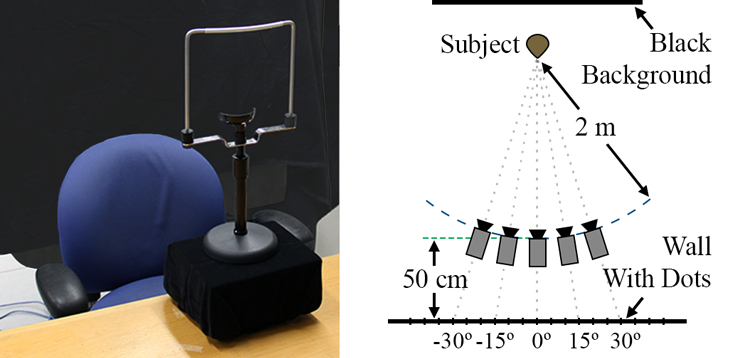

Subjects were seated in a fixed location in front of a black background, and a grid of dots was attached to a wall in front of them. The dots were placed in 5° increments horizontally and 10° increments vertically. There were five camera positions marked on the floor (one for each head pose), and each position was 2 m from the subject. The dots were organized in such a way that each camera position had a corresponding 7 × 3 grid.
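As a rough illustration of this layout, the sketch below computes floor coordinates for the five camera positions. It assumes, beyond what is stated above, that the positions lie on an arc of radius 2 m centered on the subject at the five head pose angles, with the subject at the origin and the z axis pointing toward the 0° position. The sign convention and the function name `camera_position` are hypothetical.

```python
import math

CAMERA_DISTANCE_M = 2.0               # stated distance from subject to camera
HEAD_POSES_DEG = [-30, -15, 0, 15, 30]


def camera_position(pose_deg, distance_m=CAMERA_DISTANCE_M):
    """Floor-plane (x, z) coordinates in meters, under the arc assumption above."""
    theta = math.radians(pose_deg)
    return (distance_m * math.sin(theta), distance_m * math.cos(theta))


for pose in HEAD_POSES_DEG:
    x, z = camera_position(pose)
    print(f"{pose:+4d} deg: x = {x:+.3f} m, z = {z:.3f} m")
```

Under these assumptions the ±30° positions sit about 1 m to either side of the 0° position's axis, which gives a sense of the floor space the setup requires.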

The subjects used a height-adjustable chin rest to stabilize their face and position their eyes 70 cm above the floor. The camera was placed at eye height, as was the center row of dots. For each subject and head pose (camera position), we took three to six images of the subject gazing (in a raster scan fashion) at each dot of the pose's corresponding grid of dots. The images were captured asynchronously.


Gaze Locking: Passive Eye Contact Detection for Human–Object Interaction

Proceedings of UIST 2013 [Acceptance Rate: 19.6%]
