Gaze Locking: Passive Eye Contact Detection for Human–Object Interaction

A technique for interacting with objects by looking at them.

Overview

Eye contact plays a crucial role in our everyday social interactions, but today's gaze-powered devices rely on gaze tracking (measuring the exact angle at which people are looking) rather than eye contact. That extra precision comes at a cost: these systems require infrared lighting and calibration, and they work only at close range. By focusing on gaze locking rather than gaze tracking, we can sense eye contact directly from an image, avoiding these limitations. It's a simpler, more natural means of interacting with computers, devices, and other objects.

Human–Object Interaction

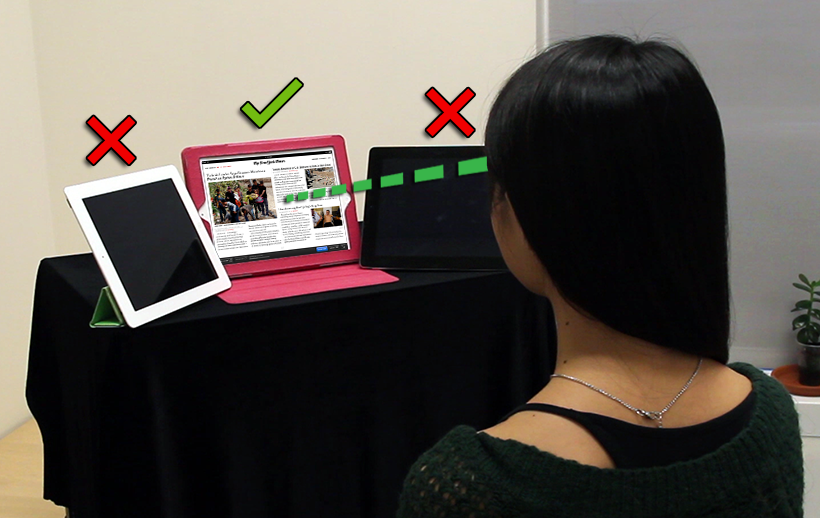

With our approach, you can interact with objects just by looking at them. In this proof of concept, we process the videos from the embedded cameras of three iPads to sense when the iPads are being looked at. Here, the woman is looking at the iPad in the middle. Since the iPads' cameras are on their extreme left, she was instructed to look at the iPads' left halves. The project video above shows our detector's output on the actual video feeds.

User Analytics

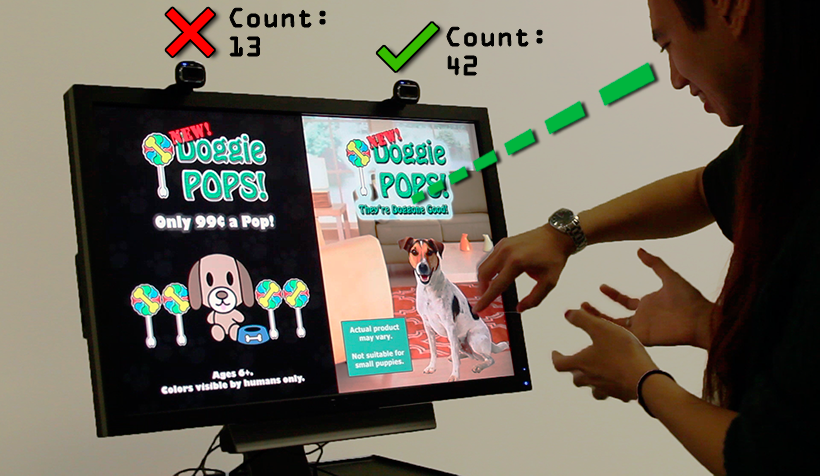

Two ordinary webcams are placed above two ads for the same product. By counting the number of times each advertisement is viewed, we can gauge which one is more effective. The counts were incremented when viewers looked at the ads' top halves. The project video above shows our detector's output on the actual video feeds.
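The counting step can be illustrated with a minimal sketch. It assumes the detector yields one boolean per video frame (eye contact or not). The function name `count_views`, the `min_frames` debounce threshold, and the sample sequences are all hypothetical and are not taken from our system.

```python
def count_views(frames, min_frames=3):
    """Count distinct views in a sequence of per-frame eye-contact flags.

    A view is counted once, at the moment eye contact has been held for
    `min_frames` consecutive frames. It is not counted again until the
    contact is broken and re-established.
    """
    views = 0
    run = 0  # length of the current streak of eye-contact frames
    for locked in frames:
        if locked:
            run += 1
            if run == min_frames:
                views += 1
        else:
            run = 0
    return views


# Made-up per-frame detector outputs for two ads (assumption, not real data).
ad_a = [False, True, True, True, True, False, True, False, True, True, True]
ad_b = [False, False, True, True, False, False, False, True, False, False, False]

print(count_views(ad_a), count_views(ad_b))  # prints "2 0"
```

Requiring a few consecutive frames before counting is one simple way to keep a single flickering detection from registering as a view; the right threshold would depend on the frame rate and the detector's noise.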

Image Search Filter

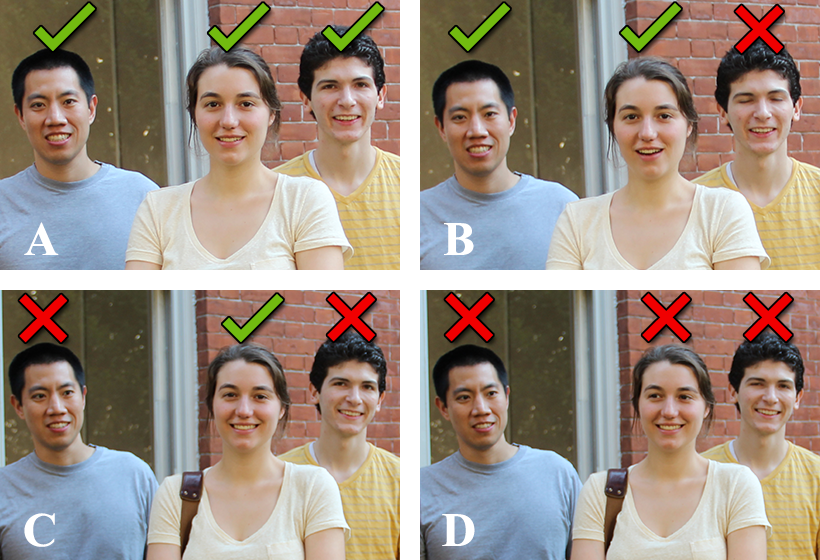

Our approach is completely appearance-based and can be applied to any image, including existing images such as those from the Web. Hence, we can sort these images (A–D) by degree of eye contact to quickly find one where everyone is looking at the camera. These are actual decisions made by our detector.
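As a rough sketch of such a filter, suppose the detector returns one boolean per detected face in each image. Here "degree of eye contact" is taken to be the fraction of faces looking at the camera, which is an assumption made for illustration. The file names and flags below are invented and do not correspond to images A–D.

```python
def eye_contact_fraction(face_flags):
    """Fraction of detected faces that are looking at the camera."""
    if not face_flags:
        return 0.0  # no faces detected: treat as no eye contact
    return sum(face_flags) / len(face_flags)


def rank_images(detections):
    """Return image names sorted from most to least eye contact."""
    return sorted(
        detections,
        key=lambda name: eye_contact_fraction(detections[name]),
        reverse=True,
    )


# Hypothetical per-face detector decisions for four group photos.
detections = {
    "photo_1.jpg": [True, False, False],
    "photo_2.jpg": [True, True, True],
    "photo_3.jpg": [False, False, False],
    "photo_4.jpg": [True, True, False],
}

ranking = rank_images(detections)
print(ranking[0])  # prints "photo_2.jpg"
```

The top-ranked image is the one where every face is flagged as making eye contact, which is the photo a user would most likely want.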

Gaze-Triggered Photography

By incorporating a gaze locking detector in a consumer-level camera, the camera could automatically take a picture when the entire group is looking straight back, allowing the photographer to join the group and still capture a perfect photo. The project video above shows our detector's output on the camera's feed.
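The trigger logic might look like the following sketch. It assumes the camera knows how many people are in the group and receives one boolean per detected face for each frame. `first_capture_frame`, `expected_faces`, and `hold_frames` are hypothetical names introduced here, not part of any camera API.

```python
def everyone_looking(face_flags, expected_faces):
    """True when all expected faces are detected and all are looking at the camera."""
    return len(face_flags) == expected_faces and all(face_flags)


def first_capture_frame(frames, expected_faces, hold_frames=2):
    """Return the index of the first frame at which to take the picture.

    The shutter fires once the whole group has held eye contact for
    `hold_frames` consecutive frames. Returns None if that never happens.
    """
    held = 0
    for index, face_flags in enumerate(frames):
        if everyone_looking(face_flags, expected_faces):
            held += 1
            if held == hold_frames:
                return index
        else:
            held = 0
    return None


# Made-up detector output for a group of three over five frames.
frames = [
    [True, False, True],   # one person looking away
    [True, True, True],    # everyone looking (1 frame held)
    [True, True],          # a face was missed, so the streak resets
    [True, True, True],    # everyone looking (1 frame held)
    [True, True, True],    # everyone looking (2 frames held): capture
]

print(first_capture_frame(frames, expected_faces=3))  # prints "4"
```

Checking the face count as well as the flags guards against firing when someone is occluded or has not yet stepped into the frame.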

Gaze Locking: Passive Eye Contact Detection for Human–Object Interaction

Proceedings of UIST 2013 [Acceptance Rate: 19.6%]

Get PDF

ACM DL Page
