Orthogonal polynomials play a central role in approximation theory, which in turn has played an important role in the development of fast algorithms. Low-degree approximations to fundamental real-valued functions allow us to speed up the computation of the corresponding matrix-valued functions. The ability to compute such functions quickly underlies various modern spectral algorithms. In this talk, I will present this interplay between polynomials and algorithms.
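As a rough illustration of how a low-degree approximation to a scalar function can stand in for a matrix function, the sketch below applies a Chebyshev interpolant of `exp` to a symmetric matrix using only matrix-vector products. It is a minimal sketch, not the speaker's method: the helper `cheb_matfunc_apply`, the choice of `exp`, the degree 12, and the assumption that the spectrum has been scaled into [-1, 1] are all illustrative.

```python
import numpy as np
from numpy.polynomial import chebyshev as C


def cheb_matfunc_apply(f, A, v, degree):
    """Approximate f(A) @ v for a symmetric A whose eigenvalues lie in [-1, 1].

    Hypothetical helper: interpolates f by a degree-`degree` Chebyshev series,
    then evaluates that series at A via the three-term recurrence
    T_{k+1}(A) v = 2 A T_k(A) v - T_{k-1}(A) v.
    """
    coeffs = C.chebinterpolate(f, degree)  # Chebyshev coefficients c_0..c_degree
    t_prev = v                             # T_0(A) v = v
    result = coeffs[0] * t_prev
    if degree == 0:
        return result
    t_curr = A @ v                         # T_1(A) v = A v
    result = result + coeffs[1] * t_curr
    for k in range(2, degree + 1):
        t_next = 2 * (A @ t_curr) - t_prev
        result = result + coeffs[k] * t_next
        t_prev, t_curr = t_curr, t_next
    return result


# Random symmetric test matrix, scaled so its spectrum lies in [-1, 1].
rng = np.random.default_rng(0)
n = 50
M = rng.standard_normal((n, n))
S = (M + M.T) / 2
A = S / np.linalg.norm(S, 2)
v = rng.standard_normal(n)

# Reference value of exp(A) v from a full eigendecomposition.
w, Q = np.linalg.eigh(A)
exact = Q @ (np.exp(w) * (Q.T @ v))

approx = cheb_matfunc_apply(np.exp, A, v, degree=12)
rel_err = np.linalg.norm(approx - exact) / np.linalg.norm(exact)
print(f"relative error with 12 matrix-vector products: {rel_err:.2e}")
```

The point of the design is that the cost is `degree` matrix-vector products rather than a full eigendecomposition, so the degree needed for a given accuracy directly controls the running time.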

Part of the talk will be based on a monograph with Sushant Sachdeva.

The \varepsilon-approximate degree of a Boolean function f is the minimum degree of a real polynomial that pointwise approximates f to error \varepsilon. Approximate degree has wide-ranging applications in theoretical computer science, from computational learning theory to communication complexity, circuit complexity, oracle separations, and even cryptography.
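Written out formally, the definition of approximate degree reads as follows. The domain convention $\{-1,1\}^n$ and the notation $\widetilde{\deg}_\varepsilon$ are assumptions made here for concreteness; the abstract does not fix them.

$$
\widetilde{\deg}_{\varepsilon}(f) \;=\; \min\Bigl\{ \deg p \;:\; p \in \mathbb{R}[x_1,\dots,x_n],\ \ \lvert p(x) - f(x) \rvert \le \varepsilon \ \text{ for all } x \in \{-1,1\}^n \Bigr\}
$$

For example, a classical result is that the OR function on $n$ bits has $\widetilde{\deg}_{1/3}(\mathrm{OR}_n) = \Theta(\sqrt{n})$, far below its exact degree $n$.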

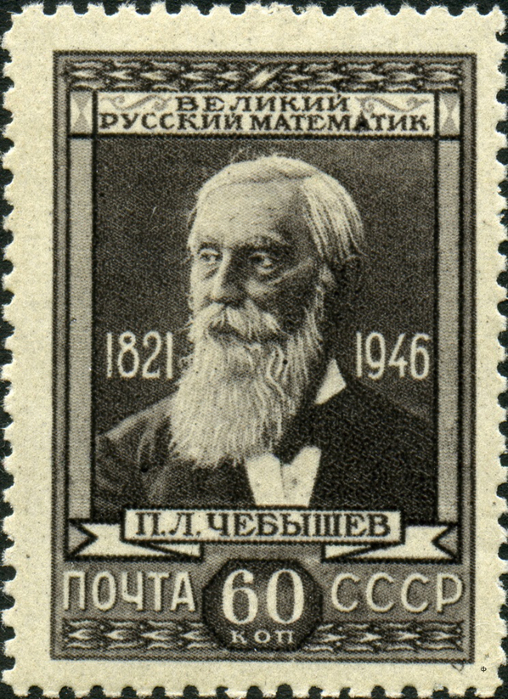

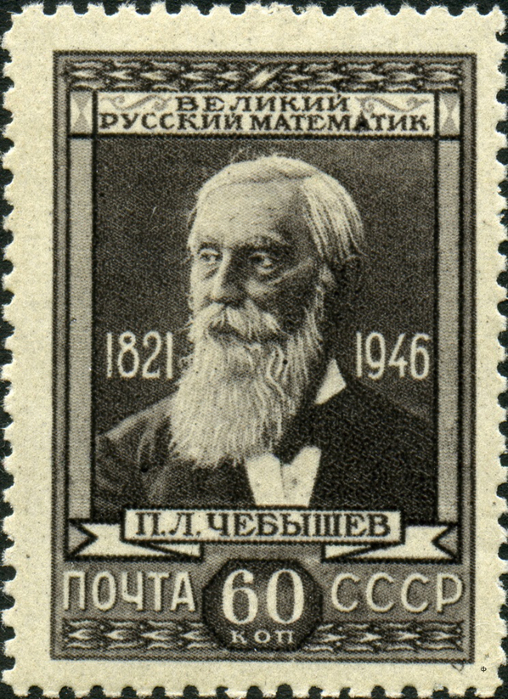

This talk will survey what is known about approximate degree and its many applications. I will start by describing optimal approximations for several fundamental classes of functions; these approximations underlie many state-of-the-art algorithms and are often based on Chebyshev polynomials. I will then describe recent progress towards showing that these approximations are essentially optimal; these lower bounds have enabled striking progress on longstanding open problems, especially in communication complexity.

[slides] [video]

Using examples from my work on statistical property estimation, we will explore the three main families of orthogonal polynomials: Chebyshev, Laguerre, and Hermite. These three classes of polynomials mimic aspects of rather different types of functions: Chebyshev polynomials mimic sines and cosines; Laguerre polynomials add aspects of exponential functions; and Hermite polynomials inherit properties from Gaussians, including being well-behaved under Fourier transforms. We will see how these perspectives provide a general toolkit for flexible polynomial constructions, yielding both algorithmic upper bounds and lower bounds.
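To make the three families concrete, the sketch below numerically checks that each is orthogonal under its standard weight: $1/\sqrt{1-x^2}$ on $[-1,1]$ for Chebyshev, $e^{-x}$ on $[0,\infty)$ for Laguerre, and the Gaussian $e^{-x^2}$ on the real line for (physicists') Hermite. It also checks the identity $T_n(\cos\theta) = \cos(n\theta)$ behind the Chebyshev connection to cosines. This is only an illustration using NumPy's Gauss quadrature routines; the helper `gram_matrix` and the degree cutoff are hypothetical choices, not taken from the talk.

```python
import numpy as np
from numpy.polynomial import chebyshev, laguerre, hermite


def gram_matrix(vander, gauss, max_degree):
    """Gram matrix G[i, j] = integral of P_i * P_j against the family's weight.

    Hypothetical helper: uses Gauss quadrature with max_degree + 1 nodes,
    which is exact for the polynomial products involved (degree <= 2 * max_degree).
    """
    nodes, weights = gauss(max_degree + 1)
    V = vander(nodes, max_degree)          # V[k, i] = P_i(nodes[k])
    return V.T @ (weights[:, None] * V)


# Each family: (pseudo-Vandermonde builder, Gauss quadrature rule for its weight).
families = {
    "Chebyshev": (chebyshev.chebvander, chebyshev.chebgauss),
    "Laguerre": (laguerre.lagvander, laguerre.laggauss),
    "Hermite": (hermite.hermvander, hermite.hermgauss),
}

max_off_diagonal = {}
for name, (vander, gauss) in families.items():
    G = gram_matrix(vander, gauss, max_degree=5)
    off = G - np.diag(np.diag(G))
    # Largest off-diagonal entry, relative to the largest diagonal entry.
    max_off_diagonal[name] = np.max(np.abs(off)) / np.max(np.abs(np.diag(G)))
    print(f"{name}: relative off-diagonal size {max_off_diagonal[name]:.1e}")

# Chebyshev polynomials "mimic cosines": T_n(cos(theta)) = cos(n * theta).
n = 4
theta = np.linspace(0.0, np.pi, 7)
t_n = chebyshev.chebval(np.cos(theta), [0] * n + [1])  # coefficient vector selecting T_n
cheb_identity_gap = np.max(np.abs(t_n - np.cos(n * theta)))
print(f"max |T_{n}(cos t) - cos({n} t)| = {cheb_identity_gap:.1e}")
```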

[slides] [video]