The AVENUE Project

Overview

3-D models of urban sites are needed in a variety of applications, such as city planning, urban design, historical preservation and archaeology, fire and police planning, military applications, virtual and augmented reality, geographic information systems and many others. Currently, such models are typically created by hand, which is extremely slow and error-prone. AVENUE addresses these problems by building a mobile system that will autonomously navigate around a site and create a model with minimal human interaction, if any.

The entire task is complex and requires the solution of a number of fundamental problems:

- the creation of complete 3-D models of buildings and other large urban structures

- the fusion of range and image data

- the automated planning of new viewpoints

- the automated acquisition of range and image data

The modeling and view planning aspects have been addressed in the work of Ioannis Stamos, a former member of our group.

The automated data acquisition problem is further decomposed into:

- a mobile platform to physically transport and operate the acquisition devices

- a software architecture for the mobile system

- localization components to determine the position and orientation of the platform

- a motion control component to control the motion of the platform

- path planning (by Paul Blaer)

- user interface (by Ethan Gold)

Mobile Platform

- a pan-tilt unit holding a color CCD camera for navigation and image acquisition

- a high-quality Cyrax laser range scanner with a 100 m operating range

- a GPS receiver working in a carrier-phase differential (also known as real-time kinematic) mode. The base station is installed on the roof of one of the tallest buildings on our campus. It provides differential corrections via a radio link and over the network.

- an integrated HMR-3000 module consisting of a digital compass and a 2-axis tilt sensor

- an 11 Mb/s IEEE 802.11b wireless network for continuous connectivity with remote hosts during autonomous operations. Numerous base stations are being installed on campus to extend the range of network connectivity.

The robot and all devices above are controlled by an on-board dual Pentium III 500 MHz machine with 512 MB RAM running Linux.
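As an illustration of how a GPS fix and a compass reading from the devices above could be combined into a planar pose, here is a minimal sketch. It is not the project's code: the function name `local_pose`, the flat-Earth (local tangent plane) approximation, and the choice to ignore magnetic declination and tilt are all assumptions made for the example.

```python
import math

EARTH_RADIUS_M = 6371000.0  # mean Earth radius; adequate over campus-scale distances


def local_pose(lat_deg, lon_deg, heading_deg, ref_lat_deg, ref_lon_deg):
    """Return (east_m, north_m, yaw_rad) relative to a reference point.

    Hypothetical helper: uses a local tangent-plane approximation and
    treats the compass heading as true north (declination is ignored).
    """
    d_lat = math.radians(lat_deg - ref_lat_deg)
    d_lon = math.radians(lon_deg - ref_lon_deg)
    # East-west distance shrinks with the cosine of the latitude.
    east = EARTH_RADIUS_M * d_lon * math.cos(math.radians(ref_lat_deg))
    north = EARTH_RADIUS_M * d_lat
    # Compass heading is clockwise from north; yaw is counter-clockwise from east.
    yaw = math.radians((90.0 - heading_deg) % 360.0)
    return east, north, yaw


# Example: a fix 0.001 degrees north of the reference, robot facing east.
east, north, yaw = local_pose(40.001, -73.96, 90.0, 40.0, -73.96)
```

The approximation is only reasonable because the robot stays within a small area around the reference point; over larger distances a proper geodetic conversion would be needed.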

Software Architecture

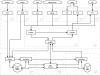

The main building blocks are concurrently executing distributed software components. Components can communicate (via IPC) with one another within the same process, across processes and even across physical hosts. Components performing related tasks are grouped into servers. A server is a multi-threaded program that handles an entire aspect of the system, such as navigation control or robot interfacing. Each server has a well-defined interface that allows clients to send commands, check its status or obtain data.
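The server idea described above (a multi-threaded program with an interface for sending commands, checking status and obtaining data) can be sketched as follows. This is an illustrative toy, not the AVENUE implementation: the class name `Server`, its method names, and the `goto` command are hypothetical, and the real system communicates via IPC across processes and hosts rather than through in-process method calls.

```python
import queue
import threading


class Server:
    """Hypothetical server: one worker thread behind three client-facing calls."""

    def __init__(self, name):
        self.name = name
        self._commands = queue.Queue()
        self._lock = threading.Lock()
        self._status = "idle"
        self._data = {}
        worker = threading.Thread(target=self._run, daemon=True)
        worker.start()

    def send_command(self, command, **params):
        """Queue a command; the worker thread executes it asynchronously."""
        self._commands.put((command, params))

    def status(self):
        """Return the current status string."""
        with self._lock:
            return self._status

    def get_data(self, key):
        """Return the latest result stored under key, or None."""
        with self._lock:
            return self._data.get(key)

    def wait_idle(self):
        """Block until every queued command has been processed."""
        self._commands.join()

    def _run(self):
        while True:
            command, params = self._commands.get()
            with self._lock:
                self._status = "busy: " + command
            result = self._handle(command, params)
            with self._lock:
                self._data[command] = result
                self._status = "idle"
            self._commands.task_done()

    def _handle(self, command, params):
        # Placeholder for device- or task-specific work.
        return params


demo = Server("demo")
demo.send_command("goto", x=10.0, y=5.0)
demo.wait_idle()
```

Keeping the command queue separate from the status and data accessors lets a client poll the server while a long-running command is still executing.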

The hardware is accessed and controlled by seven servers. A designated server, called NavServer, builds on top of the hardware servers and provides localization and motion control services, as well as a higher-level interface to the robot for remote hosts.

Components that are too computationally intensive (e.g., the modeling components) or that require user interaction (e.g., the user interface) reside on remote hosts and communicate with the robot over the wireless network link.

Localization

The second method, called visual localization, is based on camera pose estimation. It is computationally heavier, but it is used only when needed. When invoked, it stops the robot, chooses a nearby building and takes an image of it. The pose is estimated by matching linear features in the image with a simple and compact model of the building. A database of the models is stored on the on-board computer. No environmental modifications are required.
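To make the idea of matching linear features against a building model more concrete, here is a minimal sketch of one common way to score a candidate camera pose: project the endpoints of each 3-D model segment into the image and measure their distance to the matched image line. The text above does not specify the error measure actually used, so the pinhole camera model, the function names and the squared-distance cost are all assumptions for illustration.

```python
import math


def project(point, rotation, translation, focal):
    """Pinhole projection of a 3-D world point into image coordinates."""
    cam = [
        sum(rotation[i][j] * point[j] for j in range(3)) + translation[i]
        for i in range(3)
    ]
    if cam[2] <= 0:
        raise ValueError("point is behind the camera")
    return (focal * cam[0] / cam[2], focal * cam[1] / cam[2])


def point_line_distance(p, a, b):
    """Perpendicular distance from image point p to the line through a and b."""
    dx, dy = b[0] - a[0], b[1] - a[1]
    return abs(dx * (p[1] - a[1]) - dy * (p[0] - a[0])) / math.hypot(dx, dy)


def pose_error(matches, rotation, translation, focal):
    """Sum of squared endpoint-to-line distances over all matched segments."""
    total = 0.0
    for model_segment, image_segment in matches:
        for endpoint in model_segment:
            projected = project(endpoint, rotation, translation, focal)
            total += point_line_distance(projected, *image_segment) ** 2
    return total


# One horizontal model edge 10 units in front of the camera, matched to
# the image line where it appears when the camera sits at the origin.
identity = [[1, 0, 0], [0, 1, 0], [0, 0, 1]]
matches = [(((-1.0, 0.0, 10.0), (1.0, 0.0, 10.0)), ((-50.0, 0.0), (50.0, 0.0)))]
error_at_truth = pose_error(matches, identity, [0.0, 0.0, 0.0], focal=500.0)
error_shifted = pose_error(matches, identity, [0.0, 0.1, 0.0], focal=500.0)
```

A pose estimator would search over rotation and translation to minimize this error; here the cost is zero at the true pose and grows when the camera is displaced.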

Publications

- Localization Methods for a Mobile Robot in Urban Environments. IEEE Transactions on Robotics, Vol. 20, No. 5, October 2004, pp. 851-864. (with Peter K. Allen)

- Design, Implementation and Localization of a Mobile Robot for Urban Site Modeling. PhD Thesis, Computer Science Department, Columbia University, New York, NY, November 2002. (Advisor: Prof. Peter K. Allen)

- Vision for Mobile Robot Localization in Urban Environments. In Proc. of the IEEE/RSJ Int. Conf. on Intelligent Robots and Systems (IROS'02), Lausanne, Switzerland, October 2002, pp. 472-477. (with Peter K. Allen)

- AVENUE: Automated Site Modeling in Urban Environments. In Proc. of the 3rd Conference on Digital Imaging and Modeling (3DIM'01), Quebec City, Canada, May 2001, pp. 357-364. (with Peter Allen, Ioannis Stamos, Ethan Gold and Paul Blaer)

- Design, Architecture and Control of a Mobile Site-Modeling Robot. In Proc. of IEEE Int. Conf. on Robotics and Automation (ICRA'00), San Francisco, California, April 2000, pp. 3266-3271. (with Peter K. Allen, Ethan Gold and Paul Blaer)