Visual Perspective Taking for Opponent Behavior Modeling

In order to engage in complex social interaction, humans learn at a young age to infer what others can and cannot see from a different point of view, and to predict others' plans and behaviors. These abilities have been mostly lacking in robots, sometimes making them appear awkward and socially inept. Here we propose an end-to-end long-term visual prediction framework for robots to begin to acquire both of these critical cognitive skills, known as Visual Perspective Taking (VPT) and Theory of Behavior (TOB). We demonstrate our approach in the context of visual hide-and-seek, a game that represents a cognitive milestone in human development. Unlike traditional visual predictive models that generate new frames from immediate past frames, our agent can directly predict to multiple future timestamps (25 s), extrapolating by 175% beyond the training horizon. We suggest that visual behavior modeling and perspective-taking skills will play a critical role in the ability of physical robots to fully integrate into real-world multi-agent activities.

Video

Paper - To appear at ICRA 2021.

Latest version: arXiv:2105.05145 [cs.CV].

Supplementary Material

We provide more implementation details in our Supplementary Materials.

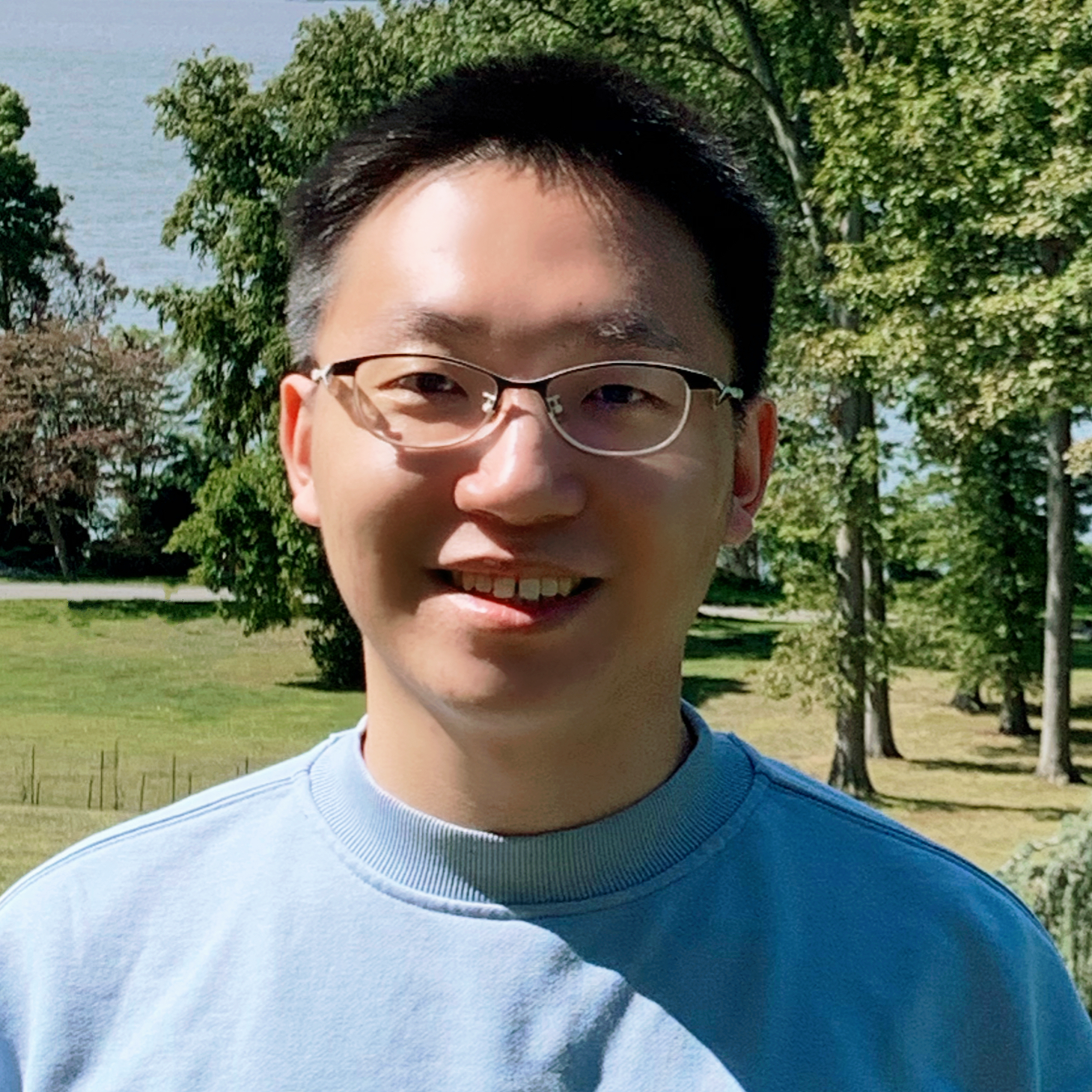

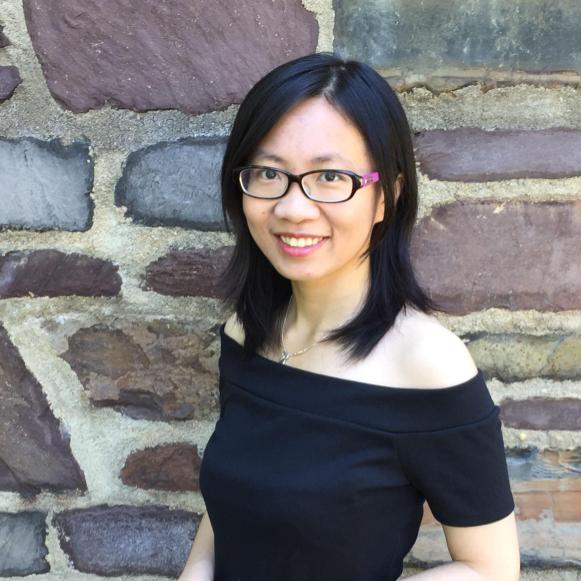

Team

Columbia University

Acknowledgements

We would like to thank Philippe Wyder and Carl Vondrick for their helpful discussions. This research is supported by NSF NRI 1925157 and DARPA MTO L2M Program grant HR0011-18-2-0020.

Contact

If you have any questions, please feel free to contact Boyuan Chen.