The AVENUE Project

Supported in part by ONR/DARPA MURI award ONR N00014-95-1-0601 and

NSF grants CDA-96-25374, EIA-97-29844 and IIS-01-21239.

What is AVENUE?

AVENUE stands for Autonomous Vehicle for Exploration and Navigation

in Urban Environments. The goal of this project is to create an

autonomous system capable of building photo-realistic, geometrically

accurate 3D models of outdoor sites. The system will be able to plan the

path to a desired viewpoint, navigate a mobile robot to this

viewpoint, acquire images and range scans of the targeted building,

and plan for the next viewpoint.

The development is split into smaller subprojects:

- The view planning and the model acquisition process are described

here.

- The mobile robot navigation system is described below.

- A path planning system is described here.

- A comprehensive graphical user interface is also under development.

Click here to see a description. Click

here to see the latest development of

the UI project.

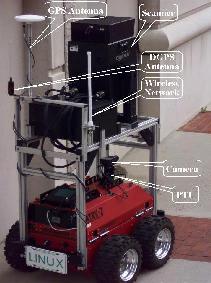

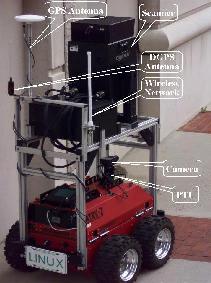

Our robot and accessories


The robot that we use is an ATRV-2 model manufactured by Real World Interface, Inc. It has a

maximum payload of 100 kg (220 lbs), and we are trying to

make good use of it. To the twelve sonars that come with the

robot we have added several sensors and peripherals:

- A pan-tilt unit holding a color CCD camera for navigation and

image acquisition

- A Cyrax laser range scanner that delivers high-quality scans and has a

100 m operating range.

- A GPS receiver working in a carrier-phase differential

(also known as real-time kinematic) mode. The base station is

installed on the roof of one of the tallest buildings on our campus.

It provides differential corrections via a radio link and over the

network.

- A Wavelan wireless network for continuous connectivity with remote

hosts during autonomous operations. Numerous base stations are being

installed on campus to extend the range of network connectivity.

The robot and all devices above are controlled by an on-board Pentium

II 300 MHz machine with 64 MB RAM running Linux.


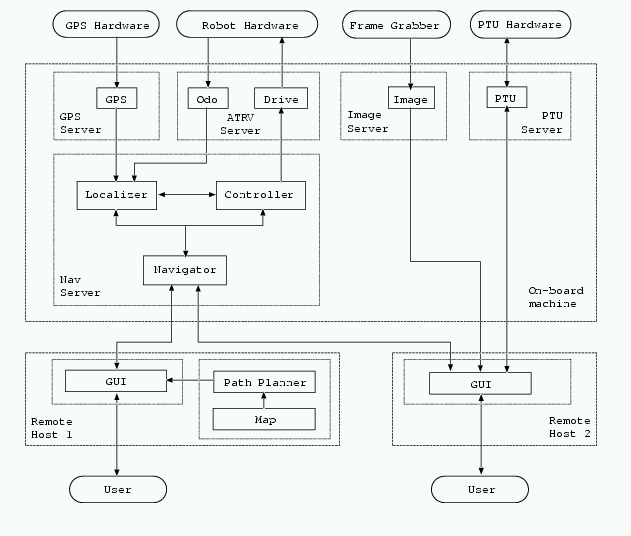

The navigation architecture

A major problem when designing a mobile robot

architecture is the distribution of computation. As with battery power,

payload, sensor range, and so on, computational resources are limited

and usually insufficient. This is especially true when it comes to

processing images or large-scale environmental models.

We believe that the correct approach is to distribute computation across

multiple computers. However, placing a number of computers on the robot is

not always desirable -- it comes at the price of reduced payload and

battery life, and it does not scale. A better solution is to

use a distributed wireless system, which offers

theoretically unlimited computational and storage resources.

Our efforts are directed towards investigating this approach.

As a first step, we have designed the distributed object-oriented

architecture shown in the figure below.

It is based on Mobility -- a robot integration software

framework developed by RWI, Inc. In addition to helping us handle the

low-level interface with the robot, Mobility provides us with

components that are abstractions of various hardware pieces, such as

sensors and actuators. It is CORBA-compliant, which translates into

platform, operating system, and programming language independence.

The main building blocks of our system are Mobility components.

Each component is a stand-alone piece of code that performs a specific

task. For example, Odo provides odometric data from the robot

and Drive supplies low-level control commands to the robot.

Important components usually maintain a data structure (also a

component) that represents their state. Other components may query

this data structure about the current or a previous state (client pull

approach) or register with it to receive an automatic notification when

changes occur (a server push approach).
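The two access patterns described above can be illustrated with a minimal Python sketch. It is not Mobility code: the class `StateComponent` and its methods `update`, `query`, and `register` are hypothetical names chosen for illustration, and the bounded history is an assumption about how "a previous state" might be kept.

```python
from collections import deque
from typing import Any, Callable, Deque, List, Optional


class StateComponent:
    """Hypothetical state holder with a short history and a list of observers."""

    def __init__(self, history_size: int = 10) -> None:
        self._history: Deque[Any] = deque(maxlen=history_size)
        self._observers: List[Callable[[Any], None]] = []

    def update(self, value: Any) -> None:
        """Called by the owning component whenever its state changes."""
        self._history.append(value)
        for notify in self._observers:  # server push
            notify(value)

    def query(self, steps_back: int = 0) -> Optional[Any]:
        """Client pull: return the current state (0) or an earlier one."""
        if steps_back < 0 or steps_back >= len(self._history):
            return None
        return self._history[-1 - steps_back]

    def register(self, callback: Callable[[Any], None]) -> None:
        """Server push: the callback is invoked on every later update."""
        self._observers.append(callback)


# Usage: one client registers for notifications, another polls on demand.
odo_state = StateComponent()
received: List[Any] = []
odo_state.register(received.append)
odo_state.update((0.0, 0.0))
odo_state.update((1.0, 0.5))
latest = odo_state.query()      # current state
previous = odo_state.query(1)   # state one update earlier
```

Pull suits clients that need a value only occasionally, while push avoids polling when a client must react to every change.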

Components performing related tasks are grouped into servers. A server

is a multi-threaded program that handles an entire aspect of the

system, such as robot interfacing, navigation control and so on.

To ensure maximum flexibility, each hardware device is controlled by its

own server. The hardware servers are usually simple and serve three

purposes:

- insulate the other components from the low-level hardware details,

such as interface, measurement units, etc.

- provide multiple views, including user-defined ones, of the data coming

from the device (e.g. polar or Cartesian coordinates)

- control the volume of the data flow, for example the rate at

which images will be grabbed.
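As an illustration of the second and third purposes, the following Python sketch converts range readings from a polar view to a Cartesian one and throttles a data stream to a maximum rate. The names `polar_to_cartesian` and `RateLimiter` are hypothetical and do not come from Mobility; timestamps are assumed to be in seconds.

```python
import math
from typing import List, Optional, Tuple


def polar_to_cartesian(readings: List[Tuple[float, float]]) -> List[Tuple[float, float]]:
    """Convert (range_m, bearing_rad) pairs to (x, y) in the sensor frame."""
    return [(r * math.cos(a), r * math.sin(a)) for r, a in readings]


class RateLimiter:
    """Accept a sample only if enough time has passed since the last accepted one."""

    def __init__(self, max_rate_hz: float) -> None:
        self._min_interval = 1.0 / max_rate_hz
        self._last_time: Optional[float] = None

    def accept(self, timestamp: float) -> bool:
        if self._last_time is None or timestamp - self._last_time >= self._min_interval:
            self._last_time = timestamp
            return True
        return False


# Usage: keep at most two frames per second from a faster stream.
limiter = RateLimiter(max_rate_hz=2.0)
kept = [t for t in (0.0, 0.1, 0.5, 0.9, 1.0) if limiter.accept(t)]
```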

Our hardware is controlled by four servers that perform some or all

of the tasks above. The ATRVServer is the interface to the

robot's hardware and comes with the standard Mobility

distribution. It consists of several components that represent its

sensors and actuators or provide general robot-specific information,

such as shape and dimensions. Two components of main interest are

Odo, which provides position and velocity, and Drive,

which drives the robot.

The NavServer builds on top of the hardware servers and

provides a higher-level interface to the robot. A set of more

intuitive commands, such as "go there", "establish a local

coordinate system here", and "execute this trajectory", are

composed out of the low-level hardware control input. The server also

provides feedback on the progress of the current tasks. It consists

of three modules: Localizer, Controller, and

Navigator.

The Localizer is a part of the robot's control system that

performs data fusion. It obtains new readings from the odometry and

the GPS, registers them with respect to the same coordinate system,

and produces an overall estimate of the robot's position and velocity.
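The text does not say which fusion method the Localizer uses. The Python sketch below shows only the general idea under simple assumptions: the odometry position is first registered in the GPS (world) frame with a known 2D rigid transform, and the two position estimates are then combined by an inverse-variance weighted average. The function names, the frame parameters, and the variances are all hypothetical.

```python
import math
from typing import Tuple

Point = Tuple[float, float]


def to_world(local: Point, origin: Point, heading: float) -> Point:
    """Rotate by heading and translate by origin: local frame -> world frame."""
    x, y = local
    c, s = math.cos(heading), math.sin(heading)
    return (origin[0] + c * x - s * y, origin[1] + s * x + c * y)


def fuse(a: Point, var_a: float, b: Point, var_b: float) -> Point:
    """Inverse-variance weighted average of two position estimates."""
    w_a, w_b = 1.0 / var_a, 1.0 / var_b
    total = w_a + w_b
    return ((w_a * a[0] + w_b * b[0]) / total,
            (w_a * a[1] + w_b * b[1]) / total)


# Usage: odometry reports 1 m forward in a local frame whose origin is at
# (10, 20) in the world frame and whose x axis points along the world y axis.
odo_world = to_world((1.0, 0.0), origin=(10.0, 20.0), heading=math.pi / 2)
gps_world = (10.2, 21.0)
# Assumed variances: GPS (0.01) is trusted more than odometry (0.04).
estimate = fuse(odo_world, 0.04, gps_world, 0.01)
```

A full implementation would also fuse heading and velocity and would track how uncertainty grows as odometry drifts. A Kalman filter is a common choice for that, but it is not confirmed by the text.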

The Controller is a control module that brings the robot to a

desired pose. It executes commands of the type GOTO x,y and

TURNTO phi. Based on its target and the updates from the

Localizer, it produces pairs of desired translational and angular

velocities that it feeds to the Drive component of the

ATRVServer.
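A single control step for a GOTO x,y command might look like the Python sketch below. It is a simple proportional controller written for illustration, not the project's control law. The function `goto_step`, the gains `k_v` and `k_w`, and the velocity limits are assumptions.

```python
import math
from typing import Tuple


def wrap_angle(angle: float) -> float:
    """Wrap an angle to the interval [-pi, pi]."""
    return math.atan2(math.sin(angle), math.cos(angle))


def goto_step(pose: Tuple[float, float, float],
              target: Tuple[float, float],
              k_v: float = 0.5, k_w: float = 1.5,
              v_max: float = 1.0, w_max: float = 1.0) -> Tuple[float, float]:
    """Return one (translational, angular) velocity pair for GOTO x,y.

    pose is (x, y, theta) as estimated by the localizer; target is (x, y).
    """
    x, y, theta = pose
    dx, dy = target[0] - x, target[1] - y
    distance = math.hypot(dx, dy)
    heading_error = wrap_angle(math.atan2(dy, dx) - theta)
    # Drive forward only to the extent that the robot faces the target.
    v = min(v_max, k_v * distance) * max(0.0, math.cos(heading_error))
    # Turn towards the target, clipped to the angular velocity limit.
    w = max(-w_max, min(w_max, k_w * heading_error))
    return v, w


# Usage: target straight ahead, then target directly behind the robot.
ahead = goto_step((0.0, 0.0, 0.0), (1.0, 0.0))
behind = goto_step((0.0, 0.0, 0.0), (-1.0, 0.0))
```

In a running system this function would be called each time the Localizer publishes a new estimate, and the resulting pair would be passed on to the Drive component.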

The Navigator monitors the work of the Localizer and the

Controller, and handles most of the communication with the user

interface and other remote components. It accepts commands for

execution and reports the overall progress of the mission. It is

optimized for network traffic: it filters out the unimportant

information from the low-level components and provides a compact view

of the current system state to registered remote modules.

A mission consists of commands that are carried out sequentially. The

user specifies commands using the User Interface (details below) and

sends them to the Navigator for execution. Alternatively,

commands can be sent by any remote component. The Navigator

itself does not execute most of the commands -- it simply stores them

and resends them to the appropriate components, one at a time. It

monitors the progress of the current command and, if it completes

successfully, starts the next one. Additionally, a small group of

emergency commands exists, such as STOP, PAUSE, and

RESUME, that are processed immediately.
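The store-and-forward behaviour described above can be sketched in Python as follows. The class layout, the `dispatch` callback, and the exact semantics given to STOP, PAUSE, and RESUME are assumptions made for illustration. In particular, PAUSE here only prevents the next command from starting, which is a simplification.

```python
from collections import deque
from typing import Callable, Deque, Dict, List, Optional

EMERGENCY = {"STOP", "PAUSE", "RESUME"}


class Navigator:
    """Hypothetical sequential command queue with immediate emergency commands."""

    def __init__(self, dispatch: Callable[[str], None]) -> None:
        self._dispatch = dispatch  # forwards a command to the component that runs it
        self._pending: Deque[str] = deque()
        self._done: List[str] = []
        self._current: Optional[str] = None
        self._paused = False

    def submit(self, command: str) -> None:
        name = command.split()[0]
        if name in EMERGENCY:
            self._handle_emergency(name)  # processed immediately, never queued
        else:
            self._pending.append(command)
            self._start_next()

    def on_completed(self) -> None:
        """Called when the current command reports successful completion."""
        if self._current is not None:
            self._done.append(self._current)
            self._current = None
        self._start_next()

    def status(self) -> Dict[str, object]:
        """Compact view of the mission for newly connected clients."""
        return {"done": list(self._done), "current": self._current,
                "pending": list(self._pending)}

    def _start_next(self) -> None:
        if self._current is None and self._pending and not self._paused:
            self._current = self._pending.popleft()
            self._dispatch(self._current)

    def _handle_emergency(self, name: str) -> None:
        if name == "STOP":
            self._pending.clear()
            self._current = None
        elif name == "PAUSE":
            self._paused = True
        else:  # RESUME
            self._paused = False
            self._start_next()


# Usage: queue two commands; only the first is forwarded until it completes.
sent: List[str] = []
nav = Navigator(sent.append)
nav.submit("GOTO 5 3")
nav.submit("TURNTO 1.57")
```

Because the queue lives on the robot, the mission can proceed through `on_completed` calls even when no remote client is connected.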

The commands stored in the Navigator are accessible to

other modules. This is useful in two ways:

- it allows users who have just connected to the robot to see what

it is trying to achieve and how much it has accomplished

- it allows the robot to continue its mission, even if the network

connectivity is temporarily lost. Moreover, this is the only way to

accomplish a mission that requires passing through a region not

covered by the network.