The n^2 Problem

At the Knight First Amendment Institute’s symposium on Disrupted: Speech and Democracy in the Digital Age, both Zeynep Tufekci and Tim Wu noted the problem of the concentration of news media, especially online. The trouble is that a fair amount of concentration more or less has to exist. The challenge is to figure out how to get the good effects without the bad ones.

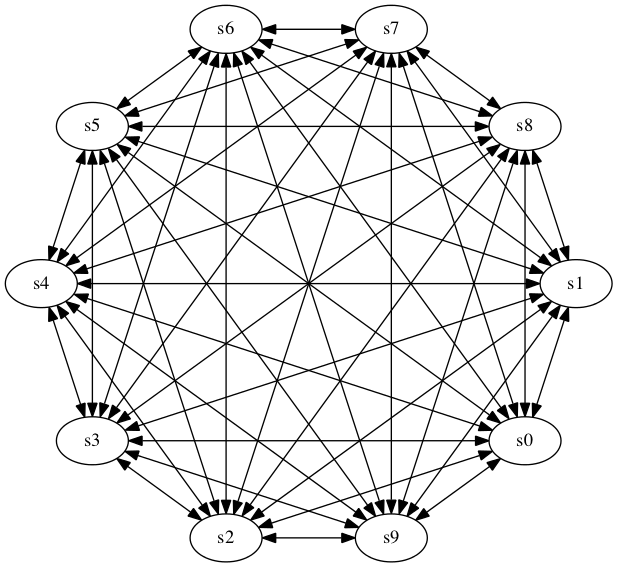

Let’s assume that there were no intermediaries. This means that everyone can speak, but also that everyone has to monitor everyone else to know what’s going on. This figure shows the patterns for 10 people.

Every arrowhead represents "listening". I’ll save you the trouble of counting; for 10 people, there are 90 arrowheads. More generally, for n people, there are approximately n² arrowheads. If we assume that there are 100,000,000 people in the US, any one of whom can make news (if only by being dragged off an airplane), there would be about 10,000,000,000,000,000 arrowheads—far too many!
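As a quick check of that arithmetic, here is a minimal sketch. It assumes each of the n people listens to each of the other n − 1 people, which gives the 90 arrowheads in the figure; the function name is mine, purely for illustration.

```python
def full_mesh_arrowheads(n: int) -> int:
    """Arrowheads when each of n people listens to every other person."""
    return n * (n - 1)


print(full_mesh_arrowheads(10))           # 90, matching the figure
print(full_mesh_arrowheads(100_000_000))  # 9999999900000000, about 10**16
```

The exact count is n(n − 1), which is why n² is only an approximation, though a very good one once n is large.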

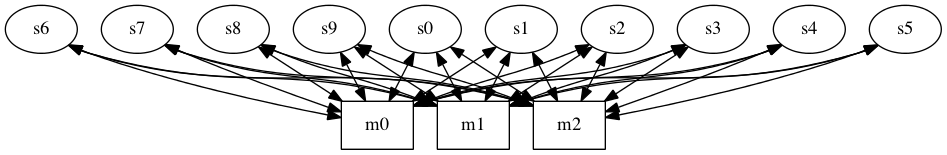

Assume, though, that in addition to the n people, there are m media outlets. In that model, while the media sites have to listen to all n people, each person only has to listen to m outlets.

There are many fewer communications channels here: m · n arrowheads. This is much more reasonable: for the same 100,000,000 people and, say, 1,000 media outlets, there are only 100,000,000,000 arrowheads, fewer by a factor of 100,000.
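The comparison can be sketched in a few lines. This uses the same counting as the text (n² for the direct model, m · n for the mediated one); the function name is hypothetical, and the numbers are the ones used above.

```python
def mediated_arrowheads(n: int, m: int) -> int:
    """Arrowheads in the intermediary model, counted as m * n as in the text."""
    return m * n


n, m = 100_000_000, 1_000
direct = n * n                      # the n**2 approximation for no intermediaries
mediated = mediated_arrowheads(n, m)
print(mediated)                     # 100000000000
print(direct // mediated)           # 100000
```

The savings factor is simply n / m, so the fewer outlets there are, the cheaper listening becomes, which is exactly the pressure toward concentration.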

We give something up, of course. For one thing, the media outlets have great power. A federal court once noted that "the Internet has achieved, and continues to achieve, the most participatory marketplace of mass speech that this country—and indeed the world—has yet seen." If we rely exclusively on the media, only they participate.

They can be biased—we all know the litany of partisan and even mendacious outlets.

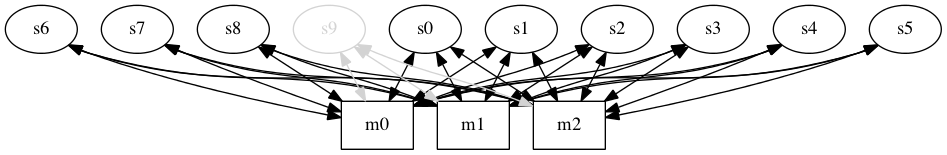

They may miss minor speakers, e.g., speaker s9 in this picture.

They may not even know about everyone—though they’ll probably do a better job than you will.

As Zeynep has pointed out, media outlets can also be "captured". That is, they’ll pay far too much attention to a minor, irrelevant, or downright false narrative, drowning out everything else.

Readers can get captured, too, by selecting only outlets that agree with their biases. This can interact with outlets (e.g., social media sites) whose only goal is financial: they show readers what they or their friends want, to keep them on-site. The result is the same: a "filter bubble".

So: pure decentralization can’t work, because it’s not scalable, and media outlets, whether traditional or new, are biased. What do we do?

An ideal solution will have several characteristics. First, it has to scale the way media outlets do, i.e., have m · n arrowheads. It has to avoid bias, whether intentional, out of unawareness, or due to filter bubbles. It has to accommodate differences in interest—I, for example, am much more interested in technology and tech policy news than I am in auto racing or fashion, and my news feeds should reflect that. It should be decentralized, to avoid dominance by any one source. Finally—and this is often overlooked—it needs a sustainable business model; large-scale newsgathering (to say nothing of competent reporting!) is expensive, and needs sources of support.

What’s the answer?

Physicality and Comprehensibility

Lately, I’ve been mulling the problem of comprehensibility: given some mechanism, do people really understand what it does or how it works?

This isn’t an idle question. The programmers who created some system may understand it in detail ("may"? I’ll skip the details in this post, but in machine learning systems the reason for some behavior may remain obscure), but the public, both ordinary citizens who may be affected by it and the legislators and government officials who may wish to regulate it, probably do not. There are a lot of reasons why they don’t, but it’s not just lack of a programming background. Quite simply, what’s happening has no intuitive meaning—most people can’t sense what’s happening.

Contrast that with physical objects: you can see how they move and interact. Certainly, there can be subtlety in a mechanical design, but you can poke it, watch it, often even decompose it.

A centrifugal speed governor is an interesting case in point.

Contrast that with the software equivalent. There will be convoluted code to read the speed sensor, complete with such unintuitive things as debouncing and a moving average of the last several samples, etc. Then there’s another complex section of code to move the actuator that controls the fuel valve; this code needs to know how fast to move the valve, perhaps more code to read the current position, etc. On top of all of that, there probably needs to be some hysteresis—at a rate that is itself software-defined—to prevent rapid changes in the speed as it "hunts" for the exact setting.
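To make that concrete, here is a toy sketch of such a control loop. It is not taken from any real governor: the class, its parameters, and the default values are all hypothetical. It keeps only a moving average of recent samples, a simple dead band standing in for the hysteresis, and a rate limit on valve movement; debouncing and reading back the valve position are left out.

```python
from collections import deque


class SoftwareGovernor:
    """Toy speed governor: smooth the sensor, then nudge a fuel valve."""

    def __init__(self, target_rpm, deadband_rpm=20.0, window=4, max_step=0.05):
        self.target_rpm = target_rpm
        self.deadband_rpm = deadband_rpm     # no correction inside this band
        self.samples = deque(maxlen=window)  # last few sensor readings
        self.max_step = max_step             # largest valve move per update
        self.valve = 0.5                     # 0.0 = closed, 1.0 = fully open

    def update(self, measured_rpm):
        """Feed one sensor reading; return the new valve position."""
        self.samples.append(measured_rpm)
        average = sum(self.samples) / len(self.samples)
        error = self.target_rpm - average
        if abs(error) <= self.deadband_rpm:
            return self.valve                # close enough: do not hunt
        step = self.max_step if error > 0 else -self.max_step
        self.valve = min(1.0, max(0.0, self.valve + step))
        return self.valve


governor = SoftwareGovernor(target_rpm=1000)
for rpm in (1000, 1010, 900, 800):
    print(rpm, governor.update(rpm))
```

Even this stripped-down version has four tunable numbers whose effect you cannot see by looking at the machine, which is rather the point.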

The greater comprehensibility of physical mechanisms matters even more for children—and we want them to understand the mechanisms around them, since understanding is a prerequisite to tinkering. Tinkering, of course, is how we all learn.

Now, understanding software has its own charms. Back when I was a working programmer, rather than a researcher, my strength was reading code rather than writing it—and I really loved doing it, even the code following this classic comment in 6th Edition Unix.

So what does all this mean? I’m certainly not suggesting that we give up software, or only use software with an obvious physical analog. I am suggesting that we need to pay more attention to making sure that everyone can understand how our software works.

Patching is Hard

There are many news reports of a ransomware worm. Much of the National Health Service in the UK has been hit; so has FedEx. The patch for the flaw exploited by this malware has been out for a while, but many companies haven’t installed it. Naturally, this has prompted a lot of victim-blaming: they should have patched their systems. Yes, they should have, but many didn’t. Why not? Because patching is very hard and very risky, and the more complex your systems are, the harder and riskier it is.

Patching is hard? Yes—and every major tech player, no matter how sophisticated they are, has had catastrophic failures when they tried to change something. Google once bricked Chromebooks with an update. A Facebook configuration change took the site offline for 2.5 hours. Microsoft ruined network configuration and partially bricked some computers; even their newest patch isn’t trouble-free. An iOS update from Apple bricked some iPad Pros. Even Amazon knocked AWS off the air.

There are lots of reasons for any of these, but let’s focus on OS patches. Microsoft—and they’re probably the best in the business at this—devotes a lot of resources to testing patches. But they can’t test every possible user device configuration, nor can they test against every software package, especially if it’s locally written. An amazing amount of software inadvertently relies on OS bugs; sometimes, a vendor deliberately relies on non-standard APIs because there appears to be no other way to accomplish something. The inevitable result is that on occasion, these well-tested patches will break some computers. Enterprises know this, so they’re generally slow to patch. I learned the phrase "never install .0 of anything" in 1971, but while software today is much better, it’s not perfect and never will be. Enterprises often face a stark choice with security patches: take the risk of being knocked off the air by hackers, or take the risk of knocking yourself off the air. The result is that there is often an inverse correlation between the size of an organization and how rapidly it installs patches. This isn’t good, but even with the very best technical people, both at the OS vendor and on site, it may be inevitable.

To be sure, there are good ways and bad ways to handle patches. Smart companies immediately start running patched software in their test labs, pounding on it with well-crafted regression tests and simulated user tests. They know that eventually, all operating systems become unsupported, and they plan (and budget) for replacement computers, and they make sure their own applications run on newer operating systems. If they won’t, they update or replace those applications, because running on an unsupported operating system is foolhardy.

Companies that aren’t sophisticated enough don’t do any of that. Budget-constrained enterprises postpone OS upgrades, often indefinitely. Government agencies are often the worst at that, because they’re dependent on budgets that are subject to the whims of politicians. But you can’t do that and expect your infrastructure to survive. Windows XP support ended more than three years ago. System administrators who haven’t upgraded since then may be negligent; more likely, they couldn’t persuade management (or Congress or Parliament…) to fund the necessary upgrade.

(The really bad problem is with embedded systems—and hospitals have lots of those. That’s "just" the Internet of Things security problem writ large. But IoT devices are often unpatchable; there’s no sustainable economic model for most of them. That, however, is a subject for another day.)

Today’s attack is blocked by the MS17-010 patch, which was released March 14. (It fixes holes allegedly exploited by the US intelligence community, but that’s a completely different topic. I’m on record as saying that the government should report flaws.) Two months seems like plenty of time to test, and it probably is enough—but is it enough time for remediation if you find a problem? Imagine the possible conversation between FedEx’s CSO and its CIO:

"We’ve got to install MS17-010; these are serious holes."

"We can’t just yet. We’ve been testing it for the last two weeks; it breaks the shipping label software in 25% of our stores."

"How long will a fix take?"

"About three months—we have to get updated database software from a vendor, and to install it we have to update the API the billing software uses."

"OK, but hurry—these flaws have gotten lots of attention. I don’t think we have much time."

So—if you’re the CIO, what do you do? Break the company, or risk an attack? (Again, this is an imaginary conversation.)

That patching is so hard is very unfortunate. Solving it is a research question. Vendors are doing what they can to improve the reliability of patches, but it’s a really, really difficult problem.

Who Pays?

Computer security costs money. It costs more to develop secure software, and there’s an ongoing maintenance cost to patch the remaining holes. Spending more time and money up front will likely result in lower maintenance costs going forward, but too few companies do that. Besides, even very secure operating systems like Windows 10 and iOS have had security problems and hence require patching. (I just installed iOS 10.3.2 on my phone. It fixed about two dozen security holes.) So—who pays? In particular, who pays after the first few years when the software is, at least conceptually if not literally, covered by a "warranty"?

Let’s look at a simplistic model. There are two costs, a development cost $d and an annual support cost $s for n years after the "warranty" period. Obviously, the company pays $d and recoups it by charging for the product. Who should pay $n·s?
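The model is simple enough to write down directly. In this sketch the function name and the dollar figures are hypothetical, chosen only to show how the n · s term grows while d stays fixed.

```python
def lifetime_cost(d: int, s: int, n: int) -> int:
    """Development cost d plus n years of post-warranty support at s per year."""
    return d + n * s


# Hypothetical figures: $10M to develop, $1M per year to support.
d, s = 10_000_000, 1_000_000
for n in (5, 10, 30):
    print(n, lifetime_cost(d, s, n))
```

With these made-up numbers, support costs equal the development cost after 10 years and are three times it after 30, so the question of who covers n · s matters more the longer the product stays in use.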

Zeynep Tufekci, in an op-ed column in the New York Times, argued that Microsoft and other tech companies should pick up the cost. She notes the societal impact of some bugs:

As a reminder of what is at stake, ambulances carrying sick children were diverted and heart patients turned away from surgery in Britain by the ransomware attack. Those hospitals may never get their data back. The last big worm like this, Conficker, infected millions of computers in almost 200 countries in 2008. We are much more dependent on software for critical functions today, and there is no guarantee there will be a kill switch next time.

The trouble is that n can be large; the support costs could thus be unbounded.

Can we bound n? Two things are very clear. First, in complex software no one will ever find the last bug. As Fred Brooks noted many years ago, in a complex program patches introduce their own, new bugs. Second, achieving a significant improvement in a product’s security generally requires a new architecture and a lot of changed code. It’s not a patch, it’s a new release. In other words, the most secure current version of Windows XP is better known as Windows 10. You cannot patch your way to security.

Another problem is that n is very different for different environments. An ordinary desktop PC may last five or six years; a car can last decades. Furthermore, while smart toys are relatively unimportant (except, of course, to the heart-broken child and hence to his or her parents), computers embedded in MRI machines must work, and work for many years.

Historically, the software industry has never supported releases indefinitely. That made sense back when mainframes walked the earth; it’s a lot less clear today when software controls everything from cars to light bulbs. In addition, while Microsoft, Google, and Apple are rich and can afford the costs, small developers may not be able to. For that matter, they may not still be in business, or may not be findable.

If software companies can’t pay, perhaps patching should be funded through general tax revenues. The cost is, as noted, society-wide; why shouldn’t society pay for it? As a perhaps more palatable alternative, perhaps costs to patch old software should be covered by something like the EPA Superfund for cleaning up toxic waste sites. But who should fund the software superfund? Is there a good analog to the potential polluters pay principle? A tax on software? On computers or IoT devices? It’s worth noting that it isn’t easy to simply say "so-and-so will pay for fixes". Coming up to speed on a code base is neither quick nor easy, and companies would have to deposit with an escrow agent not just complete source and documentation trees but also a complete build environment—compiling a complex software product takes a great deal of infrastructure.

We could outsource the problem, of course: make software companies liable for security problems for some number of years after shipment; that term could vary for different classes of software. Today, software is generally licensed with provisions that absolve the vendor of all liability. That would have to change. Some companies would buy insurance; others would self-insure. Either way, we’re letting the market set the cost, including the cost of keeping a build environment around. The subject of software liability is complex and I won’t try to summarize it here; let it suffice to say that it’s not a simple solution nor one without significant side-effects, including on innovation. And we still have to cope with the vanished vendor problem.

There are, then, four basic choices. We can demand that vendors pay, even many years after the software has shipped. We can set up some sort of insurance system, whether run by the government or by the private sector. We can pay out of general revenues. If none of those work, we’ll pay, as a society, for security failures.